Regulators urged to monitor the AI power struggle closely for potential risks

Dr. Tiberio Caetano, co-founder and chief scientist of Gradient Institute, warns that regulators need to start paying attention to anyone using vast amounts of computing power for machine learning, to prevent “bad actors” from creating harmful new AI systems.

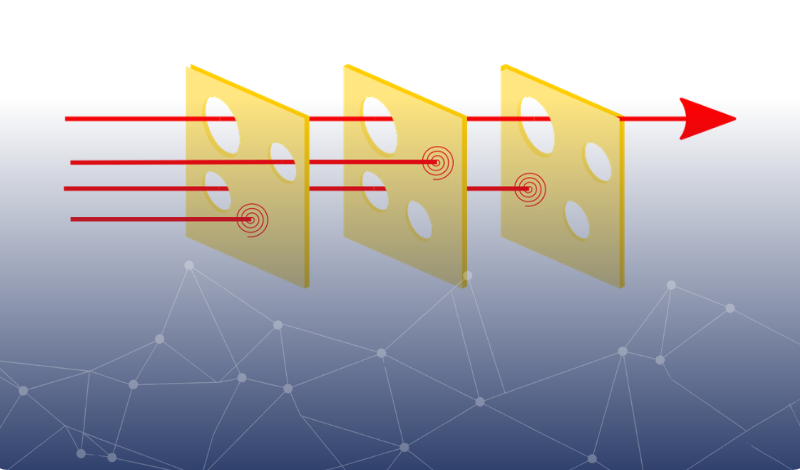

While regulators focus on the algorithms and data used in machine learning, not enough attention is paid to the role of computing power in unlocking AI capability.

Dr. Caetano explains that machine learning models acquire qualitatively new capabilities as their size or compute increases. He says that while discussions about regulating AI development are well under way, most proposals have focused only on the algorithms and data used for machine learning, and that compute needs to be added to the regulatory mix.

Read the full AFR article here.